Minor update for you all

My desktop rig has a 2 TB HDD in it that I use for media storage to serve to my network. The issue I keep running into is the NTFS file system that Windows put on it (back when I still used that). Now that I’ve switched to Linux there are some neat alternatives out there, like btrfs.

So I’m giving it a shot.

I’m running Arch Linux, but even if you aren’t, the Arch wiki is incredibly helpful. Seriously, go check it out. I’ll wait.

Pretty cool, yeah?

I’m currently running a backup job with rsync to clone my directory structure onto an ext4 file system. This is backing up to a portable 1 TB external drive I have lying around. After that’s done I’m going to wipe my NTFS drive and start from scratch with either ZFS or btrfs, depending on support and availability. I’m still exploring my options there. Then I’ll copy the data back over and enjoy better reliability and speed, while also having protection from bit rot!

The process to do that was actually dead simple. First I used this guide to refresh myself on some of the rsync commands.

I had to make a mount point for my backup drive.

$ sudo mkdir -p /media/backupdrive

Then I formatted the drive as ext4.

$ sudo mkfs.ext4 /dev/sdg
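Since mkfs wipes whatever device it is pointed at, a quick safety check before formatting can be worth it. The following is a minimal sketch, not part of what I actually ran: `is_mounted` is a hypothetical helper that looks for the device in the mount table, on the assumption that a drive that is currently mounted is probably not the one you meant to format.

```shell
# Hypothetical helper: succeeds if device $1, or anything whose name starts
# with it (such as its partitions), is listed in the mount table.
# $2 is the mount table to read; it defaults to /proc/mounts.
is_mounted() {
    awk -v dev="$1" 'index($1, dev) == 1 { found = 1 } END { exit !found }' \
        "${2:-/proc/mounts}"
}

# Example (commented out so that nothing gets formatted by accident):
#   if is_mounted /dev/sdg; then
#       echo "/dev/sdg is mounted, not formatting" >&2
#   else
#       sudo mkfs.ext4 /dev/sdg
#   fi
```

Running `lsblk` first to confirm which device name belongs to the external drive is a sensible companion to this check.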

Then I mounted it, and made sure it stays mounted by adding it to my /etc/fstab file.

$ sudo mount -t ext4 /dev/sdg /media/backupdrive
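For reference, an fstab entry for a drive like this might look something like the sketch below. The `fstab_line` helper, the UUID-based device reference and the `nofail` option are illustrative assumptions, not necessarily what is in my own file. Referring to the file system by UUID avoids surprises if the /dev/sdg name changes between boots, and `nofail` lets the system boot when the external drive is unplugged.

```shell
# Hypothetical helper: print an /etc/fstab line for an ext4 file system.
# $1 = file system UUID, $2 = mount point
fstab_line() {
    # Fields: device, mount point, type, options, dump flag, fsck pass
    printf 'UUID=%s %s ext4 defaults,nofail 0 2\n' "$1" "$2"
}

# Example (commented out; it needs root and edits a system file):
#   uuid=$(sudo blkid -s UUID -o value /dev/sdg)
#   fstab_line "$uuid" /media/backupdrive | sudo tee -a /etc/fstab
```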

Then I ran this command:

$ sudo rsync -r -z --complete --dry-run /media/hdd1/ /media/backupdrive/ > testrun.txt

$ less testrun.txt

to get a sense for the output of the command I’m about to run. With a backup job like this it’s important to do a dry run first so you don’t accidentally overlook something very important and render your data unreadable.

Then I removed the --dry-run option and let it go.
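The dry-run-then-real-run pattern can be wrapped up so both runs are guaranteed to use the same paths. This is a rough sketch under a few assumptions: `rsync_cmd` is a hypothetical helper, `--itemize-changes` is an extra I added so the dry run lists what would change, and the sketch leaves out `--complete`, which does not appear among the options in the rsync man page.

```shell
# Source and destination mount points used in this post
SRC=/media/hdd1/
DEST=/media/backupdrive/

# Hypothetical helper: print the rsync command line.
# $1 = "dry" for a dry run; anything else gives the real copy.
rsync_cmd() {
    opts="-r -z"
    if [ "$1" = "dry" ]; then
        # --dry-run changes nothing on disk; --itemize-changes lists
        # what a real run would transfer
        opts="$opts --dry-run --itemize-changes"
    fi
    echo "rsync $opts $SRC $DEST"
}

# Example (commented out so the sketch itself copies nothing):
#   sudo $(rsync_cmd dry) > testrun.txt && less testrun.txt
#   sudo $(rsync_cmd real)
```

Note that `-r` on its own does not carry over timestamps or permissions. If those matter, `-a` (archive mode) is the usual choice.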

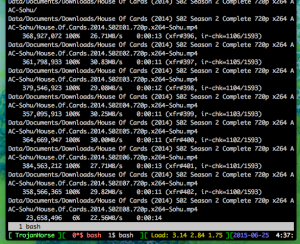

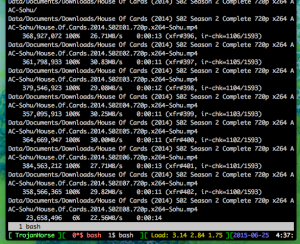

Here it is copying the files over. Of note is the -z option, which compresses file data in transit and decompresses it on the receiving end, so the files land on the destination uncompressed. Since everything here is local, the same amount of data still crosses the USB 2.0 interface either way, and I could have skipped it. This machine has the processing power to spare, so it’s not hurting much, but a lot of these files are already-compressed movies and ISOs that wouldn’t shrink further anyway.

rsync also guards against corruption in transit on its own: it verifies each transferred file against a whole-file checksum, and if that check fails it transfers the file again.
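If you want an independent check once the copy has finished, one option is to compare SHA-256 hashes of both trees. This is my own addition rather than anything rsync does for you, and `manifest` and `same_tree` are hypothetical helper names. Be warned that it reads every file on both drives, so on a large media collection it will take a while.

```shell
# Hypothetical helper: print "hash  ./relative/path" for every file
# under directory $1, sorted by path so two manifests line up.
manifest() {
    (cd "$1" && find . -type f -exec sha256sum {} + | sort -k 2)
}

# Succeeds only if both directories exist and contain the same files
# with the same contents.
same_tree() {
    [ -d "$1" ] && [ -d "$2" ] &&
        [ "$(manifest "$1")" = "$(manifest "$2")" ]
}

# Example (commented out; hashing the whole drive is slow):
#   if same_tree /media/hdd1 /media/backupdrive; then
#       echo "backup matches"
#   else
#       echo "backup differs" >&2
#   fi
```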

More updates to come! Stay tuned! Next time I’ll be showing you the process of putting everything back!